Course Format

Skills Level

Duration

Q&A

Feedback

Lecture Type

Reference collection and analysis | Blockout Model

Establish primary forms | UV map the face | Use ZWrap to project TextureXYZ detail | Process and cleanup TextureXYZ data | Apply detail to head mesh

Bridge sculpt between low Subd and High Frequency Detail | Apply additional details to sculpt (scars, burn marks) | Demo displacement extraction to 32-bit EXR format | Apply first-pass color textures | Set up initial Maya lookdev scene to test displacements and basic textures

Eyeball modeling, texturing, and shaders for realistic eyes | Align eyes | Add water line | Finalize head textures and shaders | Demo gloss map creation and additional maps | Exploration of dual-lobe specular for skin

XGen in Maya | Curve generation, XGen parameters | Modifiers for noise, clumping | Look at shader parameters and how to approach color and variation

Start in Marvelous and push clothing to 50-70% completion | Move mesh out to ZBrush / Maya to create clean topology and UVs | Use new and clean topology to continue sculpting clothing | Model and UV accessories / gear | Demo different modeling techniques with emphasis on creating clean, organized meshes

Pipeline considerations before sculpting | Sculpt and Detail the character in ZBrush | Extract Displacements in ZBrush | Decimate Meshes for Painter | Assemble Maya Scene | Export to Painter

Block out basic colors and roughness in Painter | Texture paint different surface types in Painter

Start to assemble final scene | Gather assets, organize scene | Apply shaders and begin plugging in maps | Cloth shader setup | Leather shader setup | Metallic elements shader setup

Look at different types of lighting techniques | Demo of HDRI-based lighting | Demo of studio lighting using area lights | Discuss light properties (falloff, light size, light distance) | Discuss cameras and camera properties (depth of field, shutter, aperture) | Final render settings | Output final renders | Post-processing in Photoshop / Lightroom | Discuss production techniques and how compositing is used (this course does not cover render layers or compositing) | Discussion on presentation in a portfolio (what to show, what not to show, breakdowns, etc.)

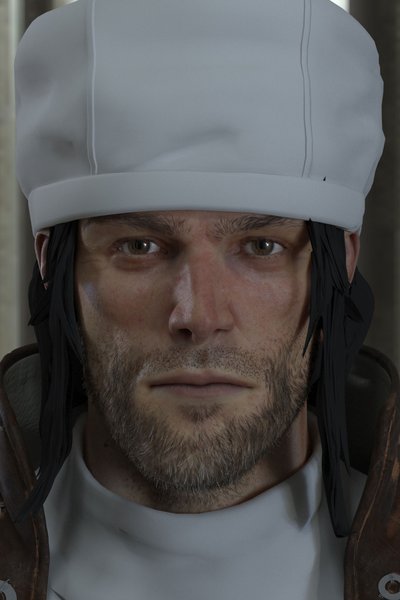

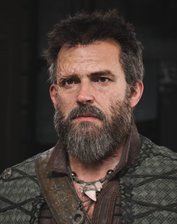

Instructor's Gallery

3x Payments

$332.67

2x Payments

$499

Full payment

$998

Receive personalized feedback on all assignments from the industry’s top professionals.

Enjoy lifetime access to the spectrum of course content, including lectures, live Q&As, and feedback sessions.

Show off your Certificate of Completion when you turn in 80% of course assignments.

Learn anywhere, anytime, and at your own pace with flexible, online course scheduling.

We can help with admissions questions, portfolio reviews, and course recommendations!